The Agentforce 360 Platform GA release is not an incremental update. It redraws the boundary between what Salesforce manages and what your architecture team owns. Getting that boundary wrong in the first 90 days will cost you 12 months of rework.

(A note on naming: Agentforce 360 Platform and Vibes are current as of the Spring ’26 release notes; some sub-features may still be pre-GA in your org. Validate against your release schedule before treating any specific configuration as production-ready.)

The Agentforce 360 Platform enterprise deployment pattern is fundamentally different from anything Salesforce has shipped before because it collapses three previously separate concerns (agent orchestration, voice channel integration, and developer tooling) into a single governed surface. That sounds like simplification. In practice, it multiplies the number of architectural decisions you need to make before you write a single line of configuration.

Here is the blueprint that holds up at scale.

How Agentforce Builder Governance Controls Actually Work

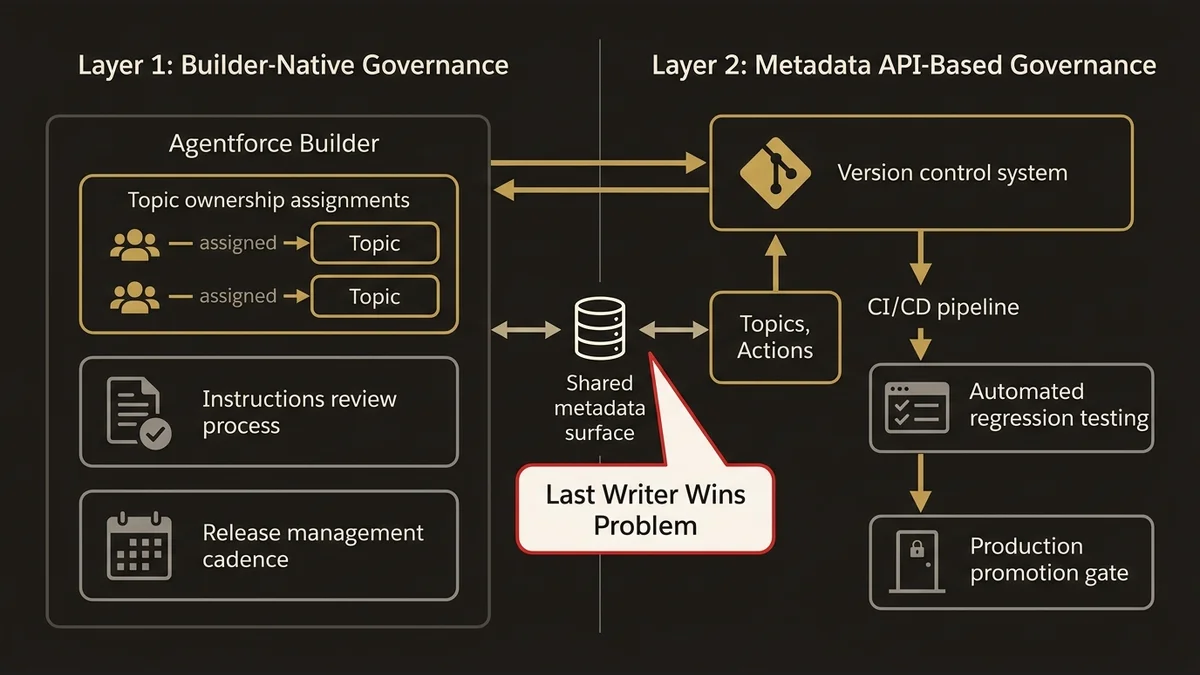

Agentforce Builder is the visual authoring environment for Topics, Actions, and Instructions. In isolation, it looks like a low-code tool. At enterprise scale, it is a governance problem.

The core issue is that Builder operates on a shared metadata surface. Any admin with the right permission set can modify a Topic’s scope or an Action’s grounding instructions without triggering a deployment pipeline. In orgs coordinating across 15+ business units, this is not a theoretical risk. It is the exact failure mode that produces agents giving contradictory answers across channels within the same week.

The architecture that works here is a two-layer governance model. The first layer is Builder-native: use Topic ownership assignments to map each Topic to a named team, and enforce Instructions review through a documented change process tied to your existing release management cadence. This is not glamorous, but it is the only way to prevent the “last writer wins” problem in a shared org.

The second layer is metadata API-based. Treat agent configuration as code. Pull Topic and Action metadata into your version control system on every change using CI/CD tooling, and gate promotion to production behind the same review process you use for Apex. The Agentforce Testing Center supports automated regression testing against defined conversation scenarios; use it as a quality gate, not an afterthought.
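The promotion gate described above can be sketched as a snapshot comparison. This is a minimal illustration, not Salesforce tooling: it assumes your pipeline has already retrieved Topic and Action definitions into plain dictionaries keyed by component name, and the names `fingerprint`, `changed_components`, and `unapproved_changes` are hypothetical.

```python
import hashlib
import json


def fingerprint(config: dict) -> str:
    """Stable hash of one Topic or Action definition (key order is ignored)."""
    canonical = json.dumps(config, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def changed_components(baseline: dict, candidate: dict) -> list[str]:
    """Names that were added, removed, or modified between two snapshots."""
    names = set(baseline) | set(candidate)
    return sorted(
        name
        for name in names
        if name not in baseline
        or name not in candidate
        or fingerprint(baseline[name]) != fingerprint(candidate[name])
    )


def unapproved_changes(baseline: dict, candidate: dict, approved: set[str]) -> list[str]:
    """Changed components with no recorded approval; an empty list means promote."""
    return [name for name in changed_components(baseline, candidate) if name not in approved]


# Hypothetical snapshots: production versus the branch asking for promotion.
production = {"Billing": {"scope": "invoices"}, "Support": {"scope": "devices"}}
branch = {
    "Billing": {"scope": "invoices and refunds"},
    "Support": {"scope": "devices"},
    "Returns": {"scope": "return authorizations"},
}
blocked = unapproved_changes(production, branch, approved={"Billing"})
```

In this example `blocked` contains only `Returns`, because the Billing change has an approval on record and Support did not change. A pipeline would fail the build whenever the list is non-empty.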

One specific pitfall: Instructions are free-text fields that the Atlas Reasoning Engine interprets at runtime. They are not validated at save time. An instruction that contradicts a Data Graph’s schema will fail silently in production and surface as degraded agent reasoning that is extremely difficult to trace back to its source. Treat Instructions with the same rigor you apply to SOQL queries in Apex triggers.
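Because nothing validates Instructions at save time, one option is a lint step in your own pipeline. The sketch below assumes a team convention that is not a platform feature: field references inside Instructions are written as `{{Object.Field}}` tokens so they can be checked against a schema exported from the Data Graph. The token syntax, the schema shape, and `unknown_references` are all hypothetical.

```python
import re

# Assumed team convention: field references are written as {{Object.Field}}.
FIELD_REF = re.compile(r"\{\{\s*([A-Za-z_]\w*)\.([A-Za-z_]\w*)\s*\}\}")


def unknown_references(instruction: str, schema: dict[str, set[str]]) -> list[str]:
    """Return Object.Field references that do not exist in the exported schema."""
    missing = []
    for obj, field in FIELD_REF.findall(instruction):
        if field not in schema.get(obj, set()):
            missing.append(f"{obj}.{field}")
    return missing


# Hypothetical schema export and instruction text.
schema = {"Case": {"Status", "Priority"}}
instruction = "Escalate when {{Case.Priority}} is High and {{Case.Severity}} is set."
problems = unknown_references(instruction, schema)
```

Here `problems` holds `Case.Severity`, the one reference the schema does not define. A check like this only catches references that follow the convention; free-text mentions of fields still need human review.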

Voice IVR Integration Patterns for Agentforce 360

Voice is where the 360 Platform earns its name and where most enterprise deployments will struggle first.

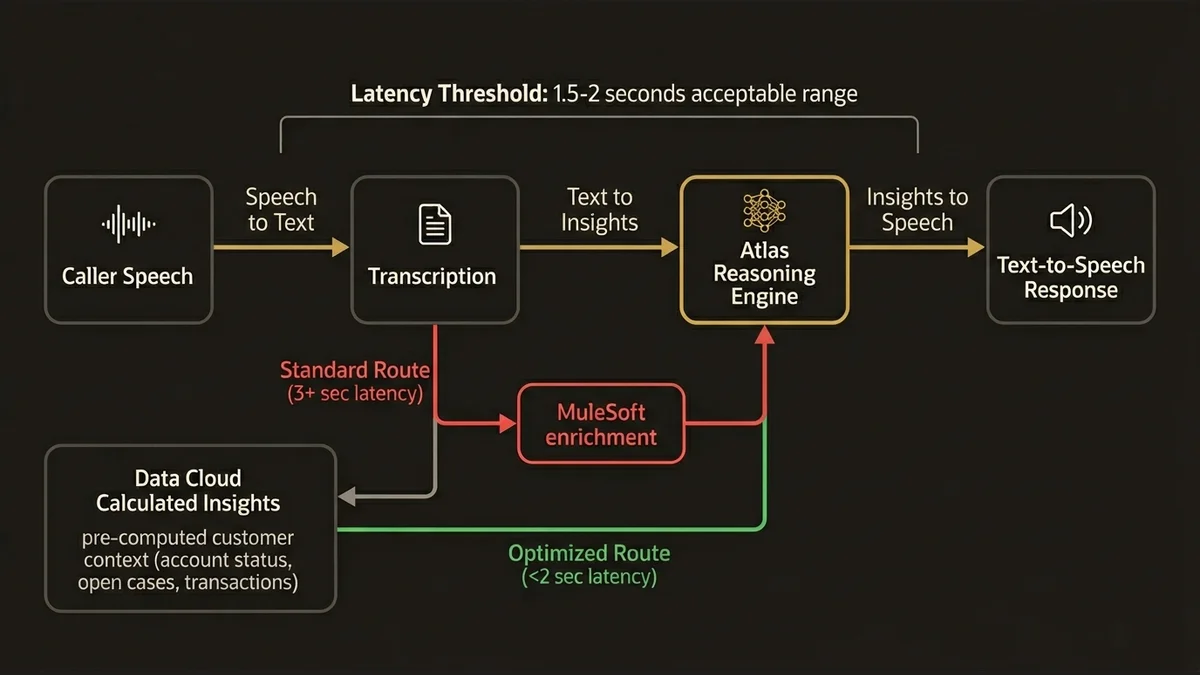

The integration pattern for Voice IVR connects Salesforce’s native Voice channel to the Atlas Reasoning Engine through a real-time transcription pipeline. The architectural implication is latency. The round-trip takes four steps: transcription, reasoning, response generation, and text-to-speech. Each step adds delay that is perceptible to callers. In practice, the acceptable threshold for IVR response latency is around 1.5 to 2 seconds. Architectures that route through MuleSoft for additional data enrichment before hitting the reasoning engine routinely exceed 3 seconds, which produces caller abandonment.
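The latency argument is simple addition, and it helps to write the budget down. In the sketch below the per-stage millisecond values are invented for illustration and are not measurements; only the four stage names and the 2-second upper threshold come from the text above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    millis: int  # illustrative estimate, not a measured value


def total_latency(stages: list[Stage]) -> int:
    """Sum of all stage delays on the call path, in milliseconds."""
    return sum(stage.millis for stage in stages)


def over_budget(stages: list[Stage], budget_ms: int = 2000) -> bool:
    """True when the call path exceeds the caller-perceptible threshold."""
    return total_latency(stages) > budget_ms


CALL_PATH = [
    Stage("transcription", 300),
    Stage("reasoning", 700),
    Stage("response generation", 400),
    Stage("text-to-speech", 300),
]
# The same path with a synchronous enrichment callout placed in front of it.
ENRICHED_PATH = [Stage("enrichment callout", 1400)] + CALL_PATH
```

With these assumed numbers the base path totals 1,700 ms and stays inside the budget, while the enriched path totals 3,100 ms and does not. Replace the estimates with your own measurements before drawing conclusions.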

The pattern that keeps latency within bounds is pre-computation. Use Data Cloud Calculated Insights to materialize the customer context the agent will most likely need (account status, open cases, recent transactions) into a Data Graph that the reasoning engine can read without making additional callouts at inference time. This shifts the latency cost from the real-time call path to the batch computation window, where it is invisible to the caller.
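The shape of the pre-computation pattern, independent of any Salesforce API, looks roughly like this. It is a conceptual sketch only: `materialize` stands in for the batch window, `context_for_call` for the read on the call path, and both names plus the dictionary-backed store are hypothetical stand-ins for Calculated Insights and a Data Graph.

```python
from typing import Callable

Context = dict[str, object]


def materialize(customer_ids: list[str], fetch: Callable[[str], Context]) -> dict[str, Context]:
    """Batch window: perform the slow lookups once, ahead of any call."""
    return {customer_id: fetch(customer_id) for customer_id in customer_ids}


def context_for_call(store: dict[str, Context], customer_id: str) -> Context:
    """Call path: a local read only; it never falls back to a live callout."""
    return store.get(customer_id, {"account_status": "unknown"})


# Hypothetical slow source, instrumented to show when callouts happen.
callouts: list[str] = []


def slow_fetch(customer_id: str) -> Context:
    callouts.append(customer_id)
    return {"account_status": "active", "open_cases": 2}


store = materialize(["c1", "c2"], slow_fetch)
during_call = context_for_call(store, "c1")
```

The design choice worth noticing is the fallback: when a customer is missing from the store, the call path returns a minimal default instead of reaching out to the source system, so the latency budget holds even on a cache miss.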

For intent routing, resist the temptation to build a single monolithic Voice agent that handles all intents. The Atlas Reasoning Engine performs better with narrowly scoped Topics. A Voice agent handling billing inquiries should have a different Topic configuration than one handling technical support, even if they share underlying Actions. The routing logic between them belongs in Flow orchestration, not inside the agent’s Instructions.
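As a rough model of keeping routing outside the agent, the logic reduces to a lookup table owned by the orchestration layer. In a real org this would live in Flow rather than Python; the intent names, agent names, and `route` function below are hypothetical.

```python
# Hypothetical routing table: each intent maps to one narrowly scoped agent.
ROUTES = {
    "billing_inquiry": "billing_voice_agent",
    "payment_dispute": "billing_voice_agent",
    "device_fault": "support_voice_agent",
}
FALLBACK = "human_queue"


def route(intent: str) -> str:
    """Pick the agent for an intent; unrecognized intents go to a human queue."""
    return ROUTES.get(intent, FALLBACK)
```

Because the table is data, adding an intent is a reviewed configuration change rather than an edit to free-text Instructions.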

Escalation to human agents deserves explicit architectural attention. The handoff must transfer the full conversation transcript and the AI agent’s reasoning trace to the receiving human agent’s screen in under 500 milliseconds. That requires Platform Events wired to a Service Console component, not a screen flow, which introduces a page reload that breaks the experience.

Developer Pair-Programming Workflows with Agentforce 360

The developer experience in Agentforce 360 is built around the premise that developers and agents collaborate on code, not that agents replace developers. The architectural implication is that you need to design the collaboration surface deliberately.

The pair-programming workflow that works in practice treats the Agentforce coding assistant as a context-aware reviewer rather than a generator. Developers write the intent; the agent surfaces relevant Apex patterns, flags governor limit risks, and suggests test coverage gaps. This requires the agent to have accurate context about your org’s data model and coding conventions, which means investing in the grounding layer before the workflow delivers value.

Grounding for developer workflows comes from two sources: your org’s metadata (object schema, existing Apex classes, Flow definitions) and your documented standards (naming conventions, error handling patterns, test coverage requirements). The first source is available natively through Data Cloud Data Streams connected to your org’s metadata API. The second requires deliberate curation; you need to ingest your standards documentation into a grounding corpus and keep it current.

The governance question for developer pair-programming is different from the governance question for customer-facing agents. Customer-facing agents need tight Topic scoping to prevent hallucination. Developer-facing agents need broad access to org metadata but strict output validation; generated code should never be deployed without human review, and your CI/CD pipeline should enforce this with a mandatory review gate that cannot be bypassed even when the agent’s confidence score is high.
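A review gate of the kind described above can be sketched as a single predicate. The field names (`author`, `approved_by`, `agent_confidence`) and the `BOT_ACCOUNTS` set are assumptions made for illustration; map them to whatever your pipeline actually records.

```python
# Hypothetical identities that must never count as human reviewers.
BOT_ACCOUNTS = {"coding-assistant"}


def may_deploy(change: dict) -> bool:
    """Allow deployment only with approval from a human other than the author."""
    reviewers = set(change.get("approved_by", [])) - {change["author"]} - BOT_ACCOUNTS
    # change["agent_confidence"] is deliberately never read: a high score
    # must not be able to substitute for human review.
    return len(reviewers) >= 1
```

For example, a change with `approved_by=["dev2"]` and a confidence of 0.4 passes, while a change approved only by its own author and the assistant fails even at 0.99.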

For orgs running large development teams across multiple time zones, the pair-programming workflow also surfaces a consistency problem. If different developers are using the agent to generate code against different versions of the grounding corpus, you get stylistic and structural drift across the codebase. Version-control your grounding corpus with the same discipline you apply to your codebase.
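One lightweight way to detect corpus drift is to derive a version identifier from the corpus contents and record it alongside each generated change. The sketch assumes the corpus can be represented as a mapping of document path to text; `corpus_version` is a hypothetical helper, not part of any Salesforce product.

```python
import hashlib


def corpus_version(documents: dict[str, str]) -> str:
    """Short content hash of the whole corpus, independent of insertion order."""
    digest = hashlib.sha256()
    for path in sorted(documents):
        digest.update(path.encode("utf-8"))
        digest.update(b"\0")  # separator so path and body cannot run together
        digest.update(documents[path].encode("utf-8"))
        digest.update(b"\0")
    return digest.hexdigest()[:12]


# Hypothetical corpus as seen by two developers.
team_a = {"naming.md": "Use PascalCase for classes.", "errors.md": "Wrap callouts in try/catch."}
team_b = {"errors.md": "Wrap callouts in try/catch.", "naming.md": "Use PascalCase for classes."}
stale = {"naming.md": "Use camelCase for classes.", "errors.md": "Wrap callouts in try/catch."}
```

Here `team_a` and `team_b` produce the same identifier because only ordering differs, while `stale` produces a different one. A pipeline could reject generated code whose recorded identifier does not match the current corpus.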

The honest position on developer pair-programming: the workflow only pays off after the grounding-corpus investment is real. Most orgs underestimate that investment by a factor of three. Below ~80 hours of curation per quarter on the standards corpus alone, the agent’s output drifts toward generic Apex patterns that ignore your conventions, and the velocity gain disappears. Above that threshold, the gains compound. Either fund the curation properly or skip the workflow until you can.

What the Vibes Security Layer Means for Your Architecture

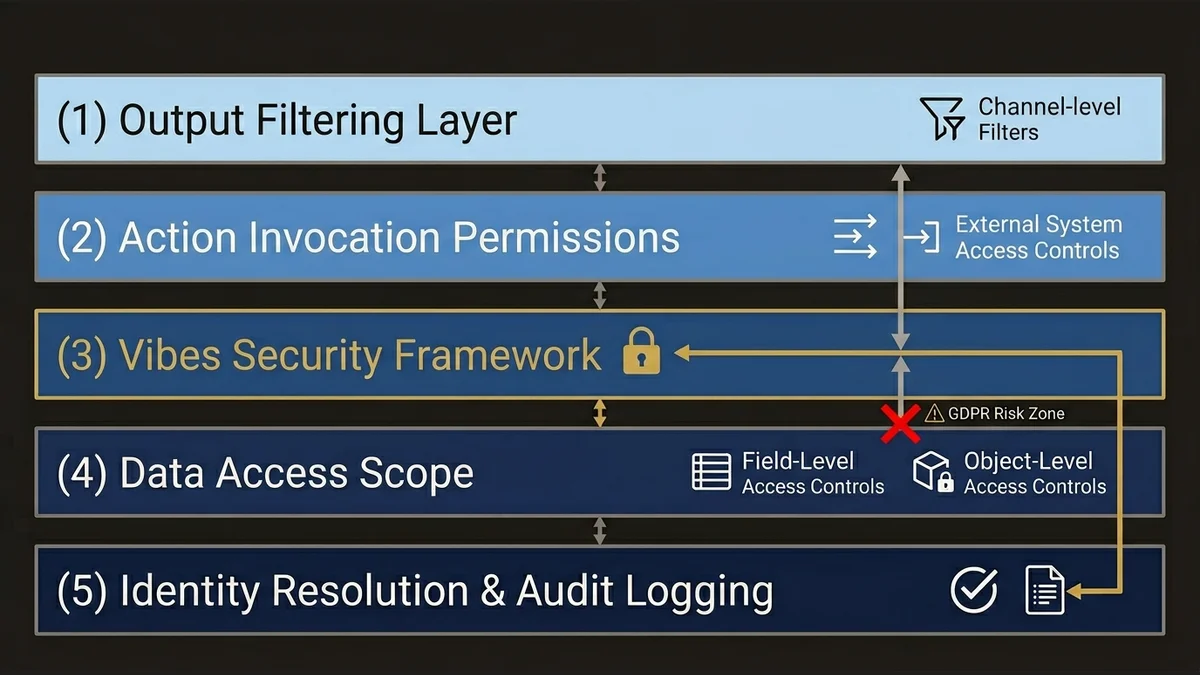

Vibes is the security framework embedded in Agentforce 360 that governs what agents can access, invoke, and return. It sits below the Topics and Actions layer and is largely invisible in Builder, which is exactly why most enterprise architects underestimate it until they hit a production incident.

The core Vibes model operates on three axes: data access scope, action invocation permissions, and output filtering. Data access scope determines which Data Model Objects and Data Graphs the reasoning engine can read during inference. Action invocation permissions determine which external systems an agent can call. Output filtering determines what the agent is permitted to return to the end user based on their profile and the channel they are using.

The architectural risk is misconfiguration at the intersection of these three axes. A common failure pattern: an agent is granted broad data access scope to support a complex use case, but output filtering is configured at the channel level rather than the data level. The result is an agent that can access sensitive fields and, under certain reasoning paths, surfaces them in responses even when the channel-level filter is supposed to prevent it.

This is not a hypothetical. It is the class of issue that produces GDPR exposure in European deployments.

The architecture that prevents this is defense-in-depth at the data layer. Configure Data Graph access at the field level, not the object level. Use Identity Resolution rulesets to ensure the agent is operating on a verified Unified Individual profile before accessing any personally identifiable data. And treat Vibes configuration as a security artifact; it belongs in your security review process alongside your sharing model and field-level security settings.
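The principle behind field-level scoping can be shown without reference to any real configuration surface: if a sensitive field is removed before the reasoning step ever sees the record, no downstream filter has to catch it. The record shape, field names, and `scope_fields` function are hypothetical and do not represent Vibes or Data Graph settings.

```python
def scope_fields(record: dict, allowed_fields: set[str]) -> dict:
    """Keep only the fields the agent is permitted to read at inference time."""
    return {name: value for name, value in record.items() if name in allowed_fields}


# Hypothetical customer record and access scope.
record = {"name": "A. Customer", "account_status": "active", "national_id": "REDACTED-EXAMPLE"}
agent_view = scope_fields(record, allowed_fields={"name", "account_status"})
```

After scoping, `agent_view` has no `national_id` key at all, so a misconfigured channel-level filter cannot leak it. Object-level access would have handed the whole record to the agent and left the output filter as the only control.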

For regulated industries, the output filtering layer needs to be auditable. Vibes supports logging of agent decisions at the output filter level. Enable this from day one. Reconstructing what an agent returned to a specific user on a specific date is not possible after the fact if logging was not active at the time.

The forward-looking implication here is significant. As Agentforce 360 expands the surface area of what agents can access and invoke, the Vibes configuration will become as critical to your security posture as your sharing model. Orgs that treat it as a deployment checkbox rather than an ongoing governance artifact will find themselves in remediation cycles that are expensive and disruptive.

For a deeper look at how the Atlas Reasoning Engine’s architecture shapes these security boundaries, see Atlas Reasoning Engine: what the architecture doesn’t show you.

If you are evaluating how to structure the broader Data Cloud foundation that Agentforce 360 depends on, the architectural patterns are covered in detail at /services/data-cloud-architecture.

Key Takeaways

- Two governance layers for Builder. Native Topic ownership for team accountability, plus metadata API CI/CD to stop configuration drift in shared orgs.

- Voice IVR latency is the first thing to break. Pre-compute customer context into Data Graphs before the call path or you will not stay under 2 seconds.

- Developer pair-programming pays off only past a real grounding-corpus investment, not on day one.

- Vibes misconfiguration at the data-access × output-filtering intersection is the GDPR exposure path in European deployments. Field-level access controls, not object-level.

- Turn audit logging on day one. Retroactive reconstruction is impossible without it, and regulators will ask.
