Salesforce’s launch of Agentforce Operations signals something more disruptive than a feature release. It’s a structural invitation to rebuild back-office workflows around agent-native process models, and most enterprise architects are not ready for what that actually requires.

The agentforce operations architecture problem is not about enabling agents. It’s about control. Specifically, how do you maintain deterministic, auditable process outcomes when the execution layer is probabilistic by design? That tension is where most enterprise implementations will either succeed or collapse.

Why Back-Office Workflows Break Under Naive Agent Deployment

Front-office use cases, sales email drafting, case summarization, next-best-action nudges, are forgiving. A suboptimal output costs a rep thirty seconds. Back-office failures cost money, compliance standing, or customer trust at scale.

Order management, billing reconciliation, procurement approvals, workforce scheduling: these processes have hard constraints. SLAs with contractual teeth. Audit trails that regulators inspect. Downstream system dependencies that don’t tolerate ambiguous outputs. Deploying an agent into this environment without a deterministic control plane is architectural malpractice.

The naive pattern is to treat Agentforce as a smarter RPA layer. Give it Topics scoped to a business process, wire up a few Actions against your ERP, and assume the Atlas Reasoning Engine will figure out the rest. In practice, this produces an agent that handles the happy path adequately and fails unpredictably on edge cases, with no reliable mechanism to detect which outcome occurred.

The Deterministic Control Plane Pattern

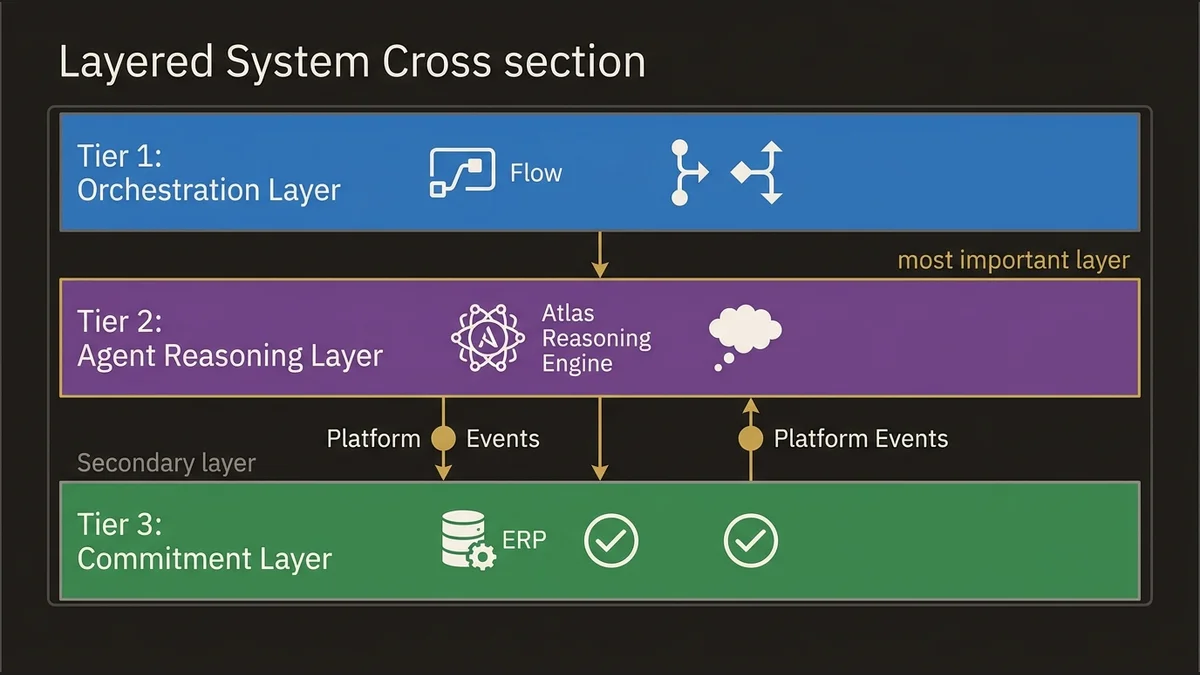

The architecture that works for enterprise back-office is a layered control plane that separates reasoning from execution, and execution from commitment.

Think of it in three tiers.

Tier 1: Orchestration Layer handles process routing and state management. Flow orchestration owns this tier. Specifically, autolaunched Flows that encode the process skeleton, branching logic, and escalation paths. The agent does not own the process; it operates within a process envelope that Flow defines. This is a critical inversion from how most teams initially design agent deployments.

Tier 2: Agent Reasoning Layer handles the cognitive work: interpreting unstructured inputs, resolving ambiguity, selecting among valid action paths, generating structured outputs. Atlas Reasoning Engine operates here. The key constraint is that this tier produces decisions, not commits. Every agent output is a candidate action, not an executed one.

Tier 3: Commitment Layer handles the actual state changes: writing to ERP, updating records, triggering downstream Platform Events, calling External Services. This tier executes only after the candidate action from Tier 2 passes validation gates defined in Tier 1.

The practical implementation uses Platform Events as the handoff mechanism between tiers. The agent emits a structured event payload; a subscriber Flow validates it against business rules, checks for required approvals, and either commits or routes to exception handling. This keeps the agent’s probabilistic reasoning completely isolated from your system-of-record writes.

[Trigger: Inbound Request]

|

[Flow: Process Envelope] --> defines valid action space

|

[Agentforce: Atlas Reasoning] --> produces candidate action

|

[Platform Event: CandidateAction__e]

|

[Flow: Validation + Commitment Gate]

|

[External Services / ERP Write / Record Update]This pattern adds latency. Accept that tradeoff. The alternative is an agent that occasionally writes corrupt state to your order management system at 2am with no human in the loop.

Data Flow Restructuring for Operational Agents

Back-office agents need a fundamentally different data architecture than front-office agents. Front-office agents primarily read context. Back-office agents read, write, and trigger downstream cascades.

The data flow restructuring has three components that most teams underinvest in.

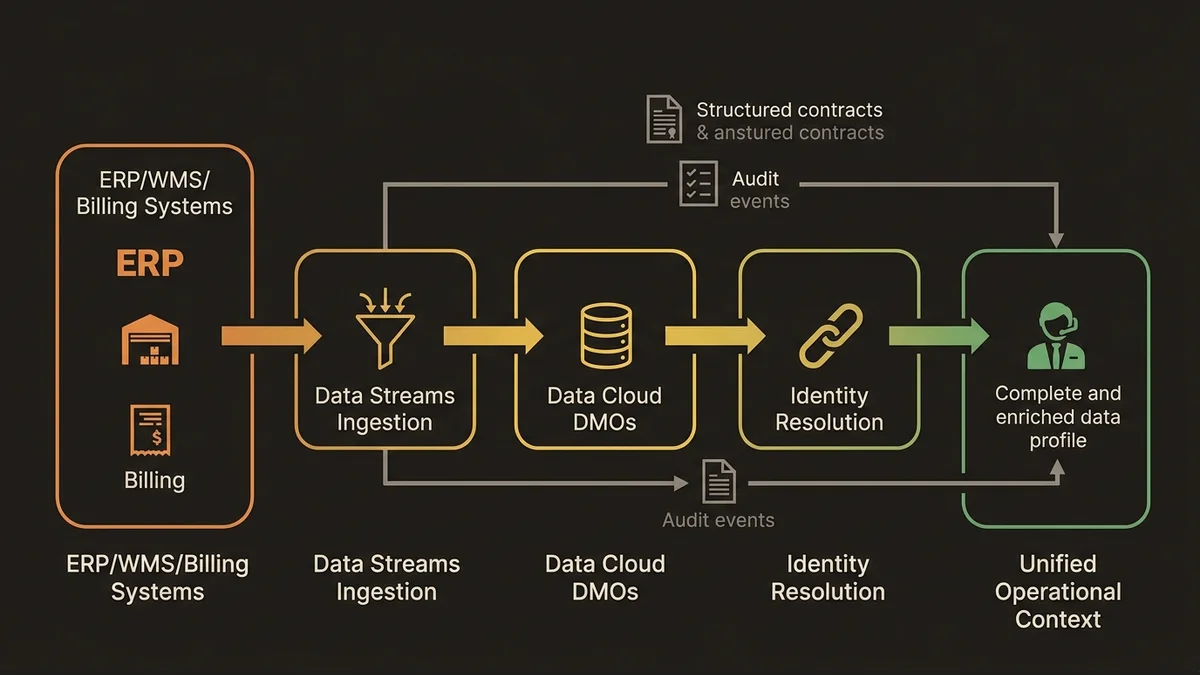

Unified Operational Context via Data Cloud. Agents making back-office decisions need a coherent view of operational state: open orders, inventory positions, contract terms, entitlements, SLA status. Without Data Cloud providing a unified operational profile through Data Graphs and Calculated Insights, agents are forced to make decisions from partial CRM data. The result is agents that approve refunds for orders already refunded, or escalate cases that were resolved in a system the agent couldn’t see.

Data Streams should ingest operational data from ERP, WMS, and billing systems into Data Cloud DMOs. Identity Resolution rulesets then link these operational records to the Unified Individual, giving the agent a complete picture before it reasons. This is not optional infrastructure for back-office use cases. It’s the foundation.

Structured Action Contracts. Every Action exposed to the agent needs a formal contract: typed inputs, typed outputs, explicit error states. Loose Action definitions that accept free-text parameters and return unstructured responses are fine for conversational agents. For back-office automation, they’re a liability. Define Actions with strict JSON schemas. Validate inputs at the Action boundary before any downstream call executes. Treat Action contracts the same way you’d treat API contracts in a microservices architecture.

Audit-First Event Architecture. Every agent decision, every Action invocation, every commitment gate outcome should emit a Platform Event that feeds an audit log. Not a Salesforce debug log. A structured, queryable audit record that compliance teams can interrogate. In regulated industries, this isn’t a nice-to-have; it’s the difference between passing an audit and explaining to regulators why your automated process has no decision trail.

(The data-cloud-agentforce-foundation-architecture article maps the full dependency model between Data Cloud and Agentforce for exactly this kind of operational context problem.)

Process Redesign Patterns That Actually Scale

Agentforce Operations is not a migration tool. You cannot take an existing process, replace the human steps with agent steps, and expect the result to be better. The processes that scale well with agent automation share specific structural characteristics.

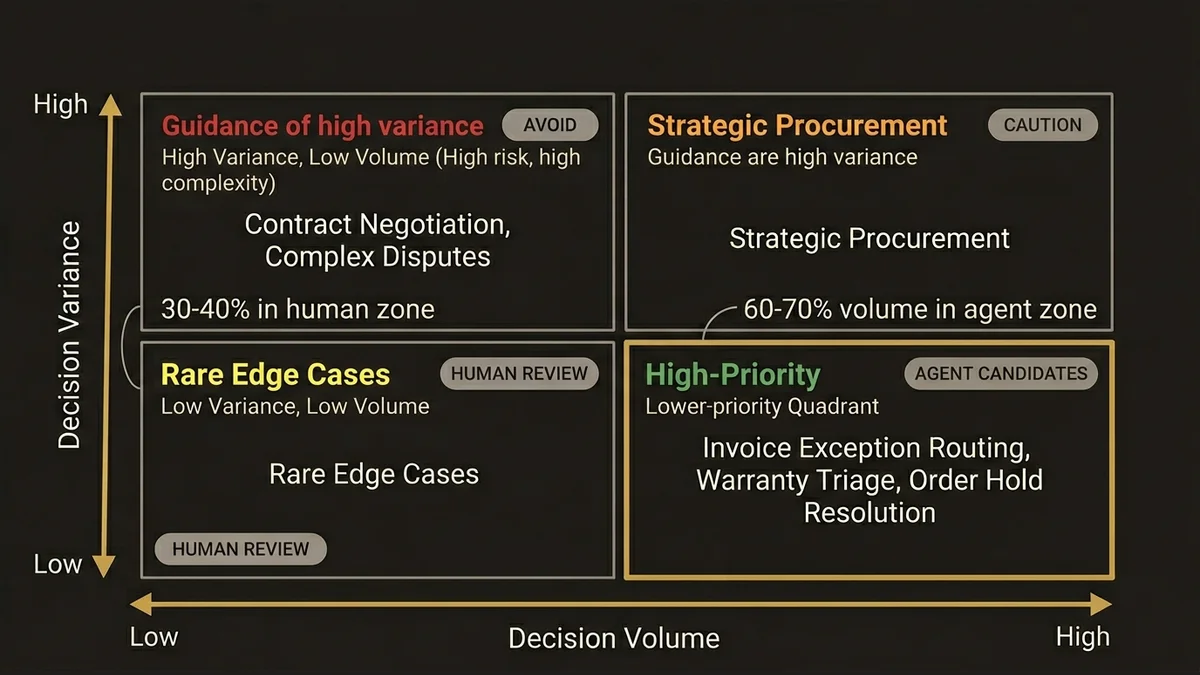

Processes with high decision volume and low decision variance are the best candidates. Invoice exception routing, warranty claim triage, order hold resolution: these involve thousands of decisions per day, but the decision logic is well-bounded. The agent handles volume; humans handle genuine exceptions.

Processes with high decision variance and low volume are the worst candidates. Contract negotiation, complex dispute resolution, strategic procurement: these require judgment that current agent architectures cannot reliably provide. Deploying agents here creates the illusion of automation while generating a hidden queue of agent-escalated exceptions that overwhelm the human review capacity you thought you’d eliminated.

The redesign pattern that works: map your process to a decision matrix. For each decision node, quantify variance (how many distinct valid outcomes exist?) and volume (how many instances per day?). High volume, low variance nodes are agent candidates. Everything else stays human or gets a human-in-the-loop gate.

At the scale of enterprise operations teams handling 50,000+ transactions per month, this analysis typically reveals that 60-70% of decision volume is concentrated in 20-30% of decision types. Those are your automation targets. The remaining 70-80% of decision types, representing 30-40% of volume, require human judgment and should be explicitly excluded from agent scope in your initial deployment.

Scalability Risks That Will Surface at Enterprise Volume

Three risks consistently emerge when Agentforce Operations deployments hit production volume.

Reasoning latency accumulation. Atlas Reasoning Engine adds latency at each reasoning step. A single-step agent action might add 1-3 seconds. A multi-step back-office process with 4-6 reasoning steps can accumulate 8-15 seconds of agent latency before the commitment layer executes. For synchronous processes where users or downstream systems are waiting, this is unacceptable. The mitigation is aggressive process decomposition: break multi-step processes into independent agent invocations that can run asynchronously, with Platform Events coordinating the handoffs.

Action failure cascade. When an agent Action fails mid-process, the default behavior without explicit error handling is an incomplete process state. In back-office contexts, partial execution is often worse than no execution. Every Action invocation needs explicit failure handling in the Flow envelope: compensating actions, rollback logic, or at minimum a guaranteed escalation path that prevents orphaned process instances.

Prompt injection via operational data. Back-office agents frequently process data from external systems: supplier invoices, customer-submitted forms, third-party logistics updates. Any of these can contain adversarial content designed to manipulate agent behavior. Prompt Builder templates for back-office agents need explicit input sanitization and strict grounding constraints. Agents should be configured to reject or flag inputs that contain instruction-like content outside expected data fields. This is an underappreciated attack surface in operational automation.

For teams building this out, the /services/agentforce-architecture work covers the full control plane design including failure mode analysis for high-volume operational deployments.

Key Takeaways

- Separate reasoning from commitment: the Atlas Reasoning Engine should produce candidate actions, never directly execute state changes in back-office processes.

- Data Cloud Data Graphs and Calculated Insights are prerequisite infrastructure for operational agents, not optional enhancements.

- Process redesign should target high-volume, low-variance decision nodes first; deploying agents against high-variance decisions generates hidden exception queues that eliminate the efficiency gains.

- Audit-first event architecture using Platform Events is non-negotiable in regulated industries and should be designed before the first agent Action is built.

- Reasoning latency accumulates across multi-step processes; design for asynchronous execution from the start, not as a retrofit when synchronous performance fails in production.

Need help with ai & agentforce architecture?

Design and implement Salesforce Agentforce agents, Prompt Builder templates, and AI-powered automation across Sales, Service, and Experience Cloud.

Related Articles

Salesforce Prompt Builder : bonnes pratiques

Prompt Builder mal configuré = agents Agentforce imprévisibles. Les bonnes pratiques architecturales pour éviter les pièges les plus coûteux.

Salesforce AI Specialist Cert Prep 2026

The Salesforce AI Specialist certification in 2026 tests architecture judgment, not recall. Here's how to prepare for what the exam actually measures.

Agentforce vs Einstein Bots: Migration Path

Einstein Bots are end-of-life. Here's the architectural migration path to Agentforce that avoids the most common data and logic traps.