Most Data Cloud implementations fail not at ingestion but at activation. The Data Cloud integration pattern for Sales and Service Cloud looks straightforward on a whiteboard (unify profiles in Data Cloud, push insights back to CRM), but the architectural decisions made in the first sprint determine whether you end up with a genuinely unified customer view or an expensive data relay that nobody trusts.

The stakes are real. Sales reps working from stale or incomplete profiles make worse decisions. Service agents without full interaction history create friction at exactly the wrong moment. Getting this integration right is not a nice-to-have.

Why the Default Wiring Creates Problems

The out-of-the-box approach most teams reach for is bidirectional sync: ingest CRM objects into Data Cloud via the native Salesforce connector, run Identity Resolution, then surface Unified Individual attributes back to Sales and Service Cloud through Data Cloud-native actions or Calculated Insights.

That works at low volume. At scale (orgs with millions of contacts, high-frequency service interactions, or complex B2B account hierarchies), it breaks in predictable ways.

The first failure mode is Identity Resolution thrash. When Sales Cloud contact records update frequently (ownership changes, enrichment jobs, territory reassignments), the Identity Resolution rulesets re-evaluate constantly. If your matching rules are even slightly permissive, you get Unified Individual instability: profiles merging and splitting, downstream Calculated Insights recalculating, and activation payloads that contradict each other within the same hour. The fix is not tightening the ruleset arbitrarily; it is designing a stable anchor identity (typically the Contact ID or a verified email) and treating all other attributes as enrichment, not matching criteria.
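The anchor-identity idea can be illustrated outside of Data Cloud with a minimal Python sketch. It is not Identity Resolution configuration; the record shape, the choice of `email` as the anchor, and the `unify` helper are all assumptions made for illustration. The point is that only the anchor decides which profile a record belongs to, so churn in owner or territory cannot merge or split profiles.

```python
from collections import defaultdict

# Assumption: a verified email is the single anchor; every other field is enrichment.
ANCHOR_KEY = "email"

def normalize_anchor(value):
    """Trim and lower-case the anchor so formatting differences do not split profiles."""
    cleaned = (value or "").strip().lower()
    return cleaned or None

def unify(records):
    """Group source records by anchor only; other attributes never influence matching."""
    profiles = defaultdict(dict)
    for record in records:
        anchor = normalize_anchor(record.get(ANCHOR_KEY))
        if anchor is None:
            continue  # no anchor: leave the record unmatched rather than guess
        for field, value in record.items():
            if field != ANCHOR_KEY:
                profiles[anchor][field] = value  # later records overwrite earlier enrichment
    return dict(profiles)

records = [
    {"email": "Ana@Example.com", "owner": "rep-1", "territory": "EMEA"},
    {"email": "ana@example.com ", "owner": "rep-2", "territory": "AMER"},  # ownership change
    {"email": None, "owner": "rep-3"},  # no anchor, stays out of the unified set
]
profiles = unify(records)
```

Here the ownership and territory change updates the enrichment on one stable profile instead of producing a second one.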

The second failure mode is treating Data Streams as a real-time integration layer. They are not. Data Streams are ingestion pipelines with batch and near-real-time modes, but “near-real-time” in Data Cloud means minutes, not milliseconds. Service Cloud use cases that require sub-second context (live chat, phone deflection, next-best-action during an active case) cannot rely on Data Cloud activation latency. The architecture that works here separates the concerns: Data Cloud handles profile enrichment and segmentation (latency-tolerant), while Platform Events or direct CRM queries handle the real-time interaction layer.

The Activation Architecture That Actually Holds

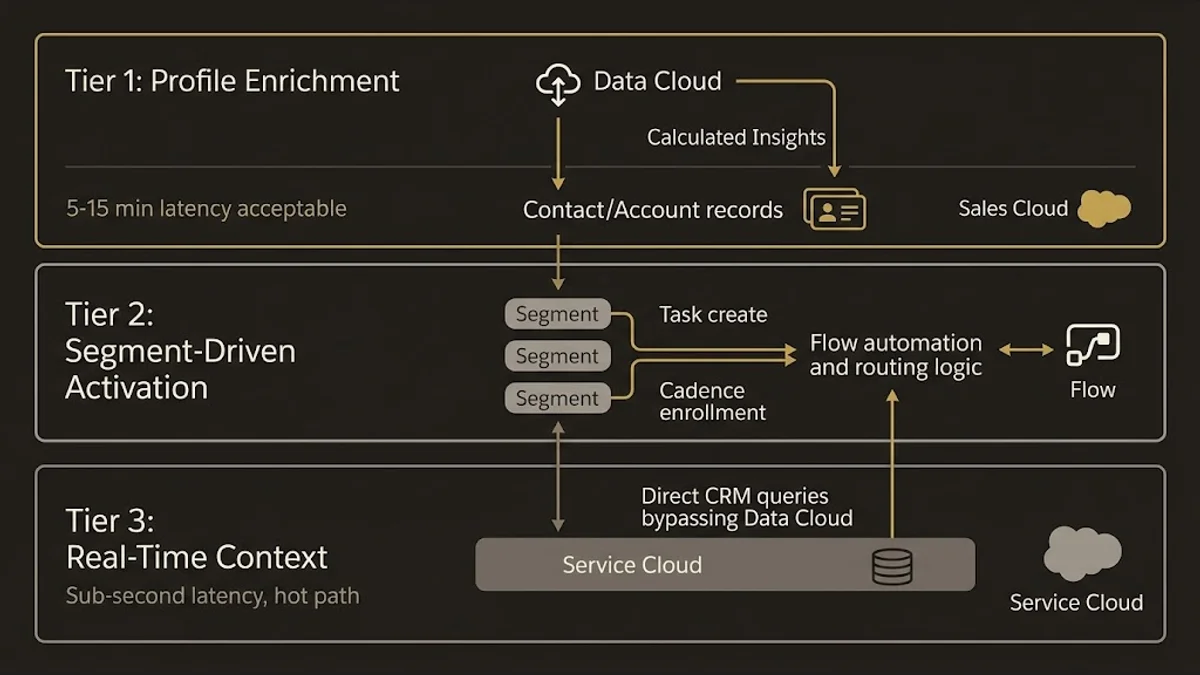

The pattern worth building toward is a layered activation model with three distinct tiers.

Tier 1: Profile enrichment. Data Cloud computes Calculated Insights (lifetime value, churn propensity, product affinity scores, engagement recency) and writes them back to the Unified Individual. These are then surfaced on the Contact or Account record in Sales Cloud via Data Cloud-connected fields. Latency here is acceptable at 5-15 minutes. Sales reps do not need a churn score updated to the second; they need it accurate and present when they open a record.

Tier 2: Segment-driven activation. Segments defined in Data Cloud drive list membership, campaign eligibility, and routing logic in Sales and Service Cloud. A contact entering a high-value renewal segment triggers a Flow in Sales Cloud that creates a task, updates a field, or enrolls the record in a cadence. This is where Data Cloud’s Activation framework connects to CRM automation: not through direct field writes but through segment membership events that Flow can react to.
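To make the "membership event" idea concrete, here is a small Python sketch that derives entered and exited events by comparing two membership snapshots, then maps an entry event to a follow-up action. It models the concept only: the segment name, the contact IDs, and the `handle` function are hypothetical, and in a real org the reaction would live in a Flow rather than in Python.

```python
def membership_events(segment, previous_members, current_members):
    """Compare two membership snapshots and return (kind, segment, contact_id) tuples."""
    entered = sorted(current_members - previous_members)
    exited = sorted(previous_members - current_members)
    return ([("entered", segment, cid) for cid in entered]
            + [("exited", segment, cid) for cid in exited])

def handle(event):
    """Hypothetical reaction: entering the renewal segment creates a task."""
    kind, segment, contact_id = event
    if kind == "entered" and segment == "high_value_renewal":
        return {"action": "create_task", "contact_id": contact_id}
    return None  # other events are ignored in this sketch

yesterday = {"003A", "003B"}
today = {"003B", "003C"}
events = membership_events("high_value_renewal", yesterday, today)
actions = [a for a in map(handle, events) if a is not None]
```

The automation reacts to the change in membership, not to the attribute values that caused it, which is what keeps field writes out of this tier.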

Tier 3: Real-time context. For live service interactions, the architecture bypasses Data Cloud entirely for the hot path. Service Cloud’s Agentforce integration or a Flow-invoked Apex action queries the CRM data model directly, pulling the most recent case history, open orders, and interaction log. Data Cloud-derived attributes (the enriched profile from Tier 1) are already on the Contact record and available without an additional callout. This keeps the real-time path clean and avoids introducing Data Cloud activation latency into a customer-facing interaction.

This three-tier separation is the architectural position worth defending. Teams that collapse all three tiers into a single Data Cloud activation flow end up with a system that is simultaneously too slow for real-time use cases and too noisy for batch enrichment.
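One way to keep the tiers from collapsing is to classify each use case by its latency budget before deciding where it runs. The Python sketch below is a planning aid, not platform code. The 900-second cutoff is an assumption taken from the upper end of the 5-15 minute enrichment latency mentioned above, and the tier labels are illustrative names.

```python
from dataclasses import dataclass

# Assumption: anything that cannot tolerate the upper end of the 5-15 minute
# enrichment latency belongs on the real-time CRM path.
ENRICHMENT_LATENCY_SECONDS = 15 * 60

@dataclass(frozen=True)
class UseCase:
    name: str
    max_latency_seconds: float
    segment_driven: bool = False

def assign_tier(use_case):
    """Route a use case to the tier whose latency profile it can tolerate."""
    if use_case.max_latency_seconds < ENRICHMENT_LATENCY_SECONDS:
        return "tier3_realtime_crm"
    if use_case.segment_driven:
        return "tier2_segment_activation"
    return "tier1_profile_enrichment"

plan = {
    uc.name: assign_tier(uc)
    for uc in [
        UseCase("live chat context", max_latency_seconds=1),
        UseCase("renewal cadence enrollment", max_latency_seconds=3600, segment_driven=True),
        UseCase("churn score on contact", max_latency_seconds=3600),
    ]
}
```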

Data Graph Design for Cross-Cloud Queries

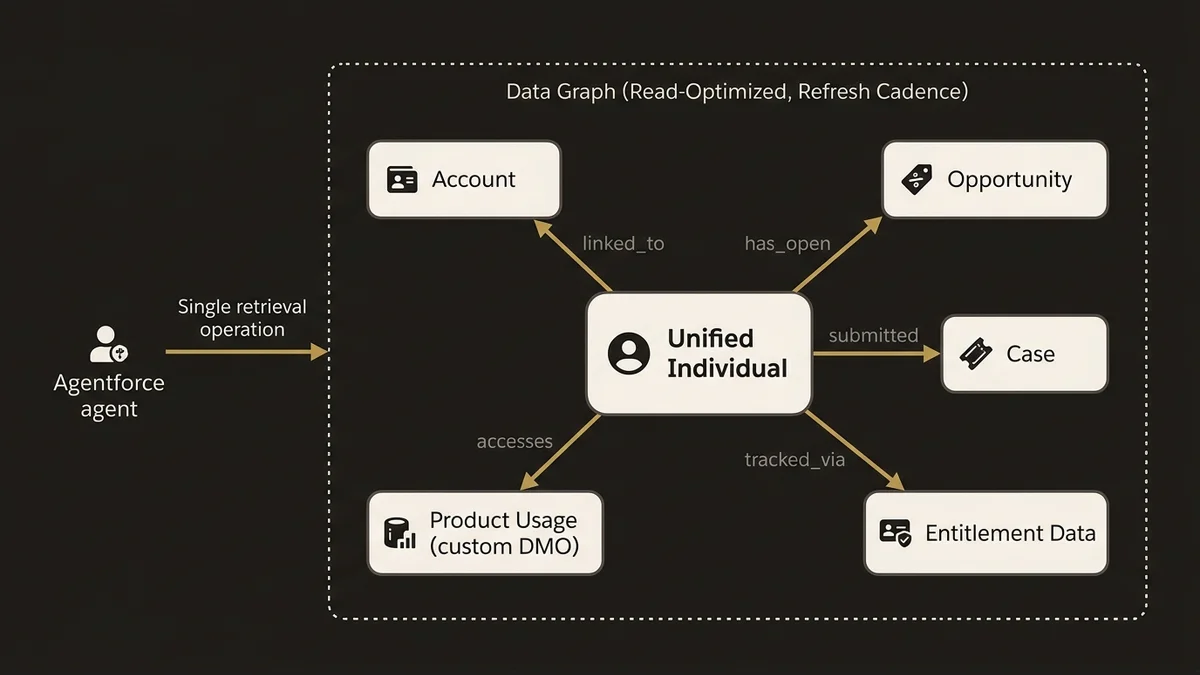

One underused capability in this integration pattern is Data Graphs. Most teams build their Data Cloud implementation around DMOs and Calculated Insights, then struggle when Agentforce or Prompt Builder needs to traverse relationships (contact to account to opportunity to case) in a single retrieval.

Data Graphs solve this by materializing pre-computed joins across DMOs into a queryable structure. For a Sales and Service Cloud integration, the practical design is a Data Graph anchored on the Unified Individual, with edges to Account, open Opportunities, recent Cases, and any custom DMOs representing product usage or entitlement data.
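The shape of such a pre-joined structure can be sketched in plain Python. This is not the Data Graph definition format; the field names (`account_id`, `is_open`, `closed_on`) and the `build_customer_graph` helper are assumptions used only to show what "joined once, read many times" looks like.

```python
def build_customer_graph(individual, accounts, opportunities, cases, max_cases=3):
    """Materialize one pre-joined document anchored on the individual."""
    account_id = individual["account_id"]
    open_opps = [o for o in opportunities
                 if o["account_id"] == account_id and o["is_open"]]
    # ISO dates sort correctly as strings, so the newest closed cases come first.
    recent_cases = sorted(
        (c for c in cases if c["individual_id"] == individual["id"]),
        key=lambda c: c["closed_on"],
        reverse=True,
    )[:max_cases]
    return {
        "individual": individual,
        "account": accounts.get(account_id),
        "open_opportunities": open_opps,
        "recent_cases": recent_cases,
    }

accounts = {"A1": {"name": "Acme", "renewal_date": "2025-09-30"}}
opportunities = [
    {"id": "O1", "account_id": "A1", "is_open": True},
    {"id": "O2", "account_id": "A1", "is_open": False},
]
cases = [
    {"id": "C1", "individual_id": "U1", "closed_on": "2025-01-10"},
    {"id": "C2", "individual_id": "U1", "closed_on": "2025-03-02"},
    {"id": "C3", "individual_id": "U2", "closed_on": "2025-03-05"},
]
graph = build_customer_graph({"id": "U1", "account_id": "A1"}, accounts, opportunities, cases)
```

A consumer reads `graph` in one step instead of issuing a lookup per relationship, and the `max_cases` cap reflects the advice to scope the graph to the retrieval pattern rather than to everything available.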

The payoff is significant when you connect this to Agentforce. The Atlas Reasoning Engine can retrieve a rich, pre-joined customer context from the Data Graph in a single operation rather than chaining multiple tool calls. For a service agent scenario, this means the agent surfaces account health, open cases, renewal date, and recent purchase history in one response, without the latency of sequential lookups.

The design constraint to respect: Data Graphs are read-optimized and have a refresh cadence. They are not a live query layer. Architect them for the retrieval patterns your agents and Prompt Builder templates actually need, not as a general-purpose graph of everything. Overly broad Data Graphs with dozens of edges become expensive to refresh and slow to query.

For a deeper look at how Data Cloud underpins Agentforce retrieval architecture, the article on Data Cloud and Agentforce foundation architecture covers the specific design decisions around Data Graph scope and refresh strategy.

What Most Teams Get Wrong at the Service Cloud Boundary

Service Cloud introduces a specific integration challenge that Sales Cloud does not: case volume creates write pressure back into Data Cloud. Every case created, updated, or closed is a potential Data Stream event. At orgs handling tens of thousands of cases per day, this creates ingestion volume that strains the Data Stream pipeline and inflates Data Cloud storage consumption.

The architectural mistake is ingesting every case field update as a discrete event. The correct approach is event-level ingestion with field filtering: ingest case creation and case closure events with a defined payload (status, category, resolution code, linked contact), and handle intermediate updates through a separate, lower-priority stream or exclude them entirely if they do not affect the Unified Individual’s profile.
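A minimal Python sketch of that filtering step follows, assuming a hypothetical event shape with a `type` and a `case` dictionary. The payload field names mirror the ones listed above (status, category, resolution code, linked contact) but are otherwise invented, and the filter would sit wherever your org shapes data before it reaches the stream.

```python
# Assumption: only creation and closure events affect the profile-level insight.
INGESTED_EVENTS = {"created", "closed"}
PAYLOAD_FIELDS = ("status", "category", "resolution_code", "contact_id")

def to_stream_payload(event):
    """Return a trimmed payload for ingested event types, or None to drop the event."""
    if event["type"] not in INGESTED_EVENTS:
        return None  # intermediate updates are excluded in this sketch
    case = event["case"]
    payload = {field: case.get(field) for field in PAYLOAD_FIELDS}
    payload["event_type"] = event["type"]
    return payload

events = [
    {"type": "created", "case": {"status": "New", "category": "Billing",
                                 "contact_id": "003A", "internal_notes": "n/a"}},
    {"type": "updated", "case": {"status": "Working", "contact_id": "003A"}},
    {"type": "closed", "case": {"status": "Closed", "category": "Billing",
                                "resolution_code": "REFUND", "contact_id": "003A"}},
]
payloads = [p for p in map(to_stream_payload, events) if p is not None]
```

Three raw events become two ingested records, and fields outside the defined payload (such as `internal_notes` here) never reach the pipeline.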

This matters for Calculated Insights accuracy. If your service engagement score is computed from case interactions, you want closed cases with resolution data, not every intermediate status change. Designing the Data Stream payload around the insight you need, rather than mirroring the full CRM object, keeps the pipeline clean and the Calculated Insights meaningful.

Another common failure at the Service Cloud boundary is assuming that Identity Resolution handles service contacts correctly. B2C service interactions often arrive with partial identity: a phone number, an email, a case contact name that does not match any existing Contact record. If your Identity Resolution rulesets are tuned for Sales Cloud’s relatively clean contact data, they will either fail to match these service interactions or create spurious Unified Individuals. The fix is a dedicated matching ruleset for service-originated records, with lower confidence thresholds and explicit fuzzy matching on phone and email, separate from the ruleset applied to Sales Cloud contacts.
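The difference between the two rulesets can be sketched in Python using the standard library's `difflib.SequenceMatcher` as a stand-in for fuzzy matching. Data Cloud's own matching methods are configured declaratively and work differently, so treat the thresholds, the `RULESETS` table, and the `is_match` helper as illustrative assumptions only.

```python
import re
from difflib import SequenceMatcher

# Assumed thresholds: strict for sales-originated records, looser for service.
RULESETS = {"sales": 0.99, "service": 0.90}

def normalize_phone(value):
    """Keep digits only so formatting differences do not block a match."""
    return re.sub(r"\D", "", value or "")

def normalize_email(value):
    return (value or "").strip().lower()

def is_match(incoming, candidate, source):
    """Exact match on normalized phone, else fuzzy email match at the source's threshold."""
    phone_a = normalize_phone(incoming.get("phone"))
    phone_b = normalize_phone(candidate.get("phone"))
    if phone_a and phone_a == phone_b:
        return True
    email_a = normalize_email(incoming.get("email"))
    email_b = normalize_email(candidate.get("email"))
    if not email_a or not email_b:
        return False
    similarity = SequenceMatcher(None, email_a, email_b).ratio()
    return similarity >= RULESETS[source]

existing = {"email": "john.smith@example.com", "phone": "1-555-010-2000"}
typo_email = {"email": "jon.smith@example.com"}
phone_only = {"phone": "+1 (555) 010-2000"}

service_hit = is_match(typo_email, existing, "service")
sales_hit = is_match(typo_email, existing, "sales")
phone_hit = is_match(phone_only, existing, "service")
```

The same near-miss email links to the existing profile under the service threshold and stays unmatched under the sales threshold, which is the behavior a separate service ruleset is meant to provide without loosening matching for Sales Cloud contacts.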

Governance Decisions That Determine Long-Term Viability

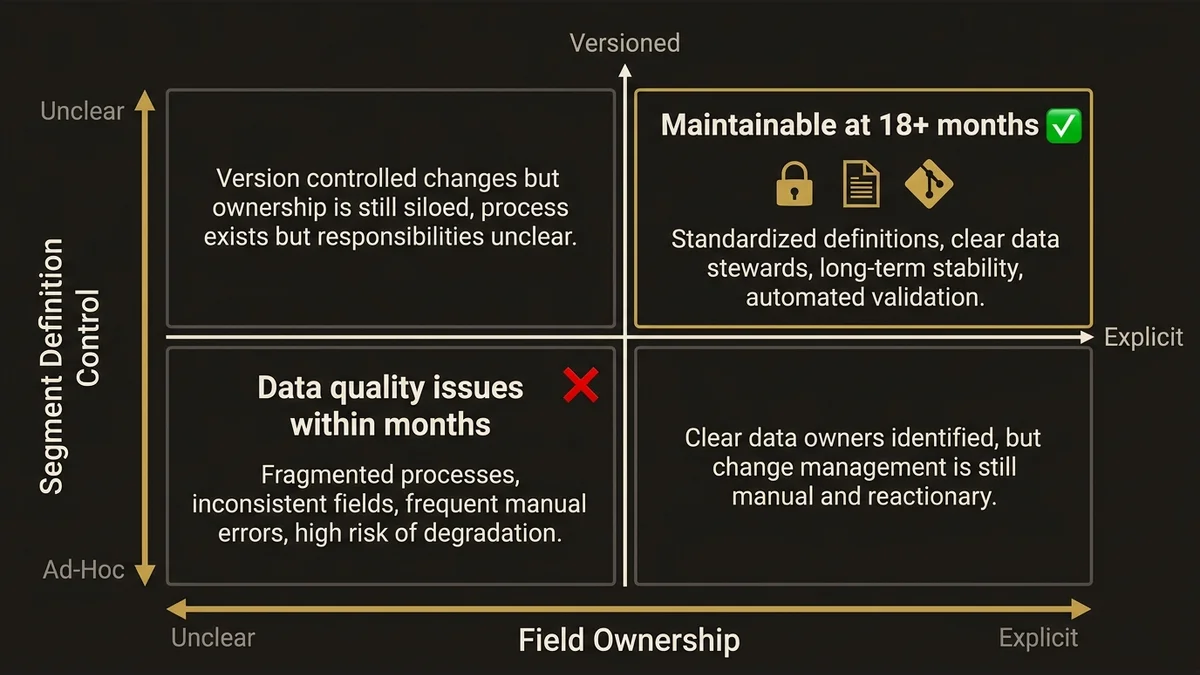

The integration pattern you build today will be maintained by people who were not in the room when you designed it. Two governance decisions have outsized impact on long-term viability.

First, field ownership. When a Calculated Insight writes a value back to a Contact field in Sales Cloud, who owns that field? If Sales ops can also write to it through a Flow or a manual update, you have a conflict that will surface as data quality issues within months. The architecture requires explicit field ownership: Data Cloud-derived fields are read-only in CRM, stamped with a last-updated timestamp, and never overwritten by CRM automation. This is a governance rule, not a technical constraint; enforce it through field-level security and documentation, not just good intentions.
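The ownership rule can be expressed as a small guard, sketched here in Python. In a real org the enforcement belongs in field-level security rather than application code; the field names, the `writer` labels, and the `apply_update` helper are hypothetical.

```python
from datetime import datetime, timezone

# Assumption: these fields are derived in Data Cloud and read-only for everyone else.
DATA_CLOUD_OWNED = {"churn_score", "lifetime_value"}

def apply_update(record, field, value, writer):
    """Write a field, rejecting non-owner writes and stamping owned fields."""
    if field in DATA_CLOUD_OWNED and writer != "data_cloud":
        raise PermissionError(f"{field} is owned by Data Cloud; {writer} may not write it")
    record[field] = value
    if field in DATA_CLOUD_OWNED:
        # Last-updated stamp, as the ownership policy requires.
        record[f"{field}_updated_at"] = datetime.now(timezone.utc).isoformat()
    return record

contact = {}
apply_update(contact, "churn_score", 0.42, writer="data_cloud")
try:
    apply_update(contact, "churn_score", 0.10, writer="sales_ops_flow")
    blocked = False
except PermissionError:
    blocked = True
```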

Second, segment definition ownership. Segments in Data Cloud that drive Sales and Service Cloud automation are business logic encoded in a technical layer. When a segment definition changes (for example, because a product manager updated the criteria), the downstream Flow behavior changes silently. The governance pattern that works is treating segment definitions as versioned artifacts with a change review process, not ad-hoc configurations. At orgs running 50+ active segments feeding CRM automation, undocumented segment changes are the most common source of “the system did something unexpected” incidents.
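One lightweight way to treat segment definitions as versioned artifacts is to fingerprint each approved definition and flag any live definition whose fingerprint differs. The Python sketch below assumes definitions can be exported as plain dictionaries. The export step, the definition shape, and the helper names are assumptions, not a Data Cloud feature.

```python
import hashlib
import json

def definition_fingerprint(definition):
    """Hash a canonical JSON form so key order does not change the fingerprint."""
    canonical = json.dumps(definition, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

def unreviewed_changes(approved_fingerprints, live_definitions):
    """Return segment names whose live definition differs from the approved version."""
    return sorted(
        name for name, definition in live_definitions.items()
        if approved_fingerprints.get(name) != definition_fingerprint(definition)
    )

approved = {
    "high_value_renewal": definition_fingerprint(
        {"min_ltv": 50000, "renewal_window_days": 90}),
}
live = {
    "high_value_renewal": {"renewal_window_days": 120, "min_ltv": 50000},  # criteria edited
    "win_back": {"inactive_days": 180},  # never reviewed
}
drift = unreviewed_changes(approved, live)
```

Running a check like this on a schedule turns a silent criteria edit into a visible review item before it changes downstream Flow behavior.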

If your org is earlier in the journey and still assessing whether Data Cloud investment is justified before committing to this architecture, the article on whether Salesforce Data Cloud is worth the investment provides a framework for that evaluation.

For teams ready to move from pattern to implementation, the /services/data-cloud-architecture service page covers how this architecture gets scoped and delivered in practice.

Key Takeaways

- Identity Resolution stability depends on anchoring matching rulesets to a single verified identity attribute, not on tightening rules across all fields. Unstable Unified Individuals cascade into unreliable Calculated Insights and broken activations.

- Data Cloud is not a real-time integration layer. Activation latency of 5-15 minutes is the realistic baseline. Service Cloud real-time use cases require a separate hot path that reads from CRM directly, using Data Cloud-enriched fields already present on the record.

- Data Graphs are the correct retrieval primitive for Agentforce. Pre-computing joins across Unified Individual, Account, Opportunity, and Case DMOs eliminates sequential tool calls and reduces Atlas Reasoning Engine latency in service agent scenarios.

- Service Cloud write pressure requires explicit Data Stream design. Ingesting every case field update creates pipeline noise and inflates storage costs. Design streams around the events that affect profile-level insights, not full object mirroring.

- Field ownership and segment versioning are the governance decisions that determine whether this integration remains maintainable at 18 months. Both require explicit policy, not just architectural intent.
